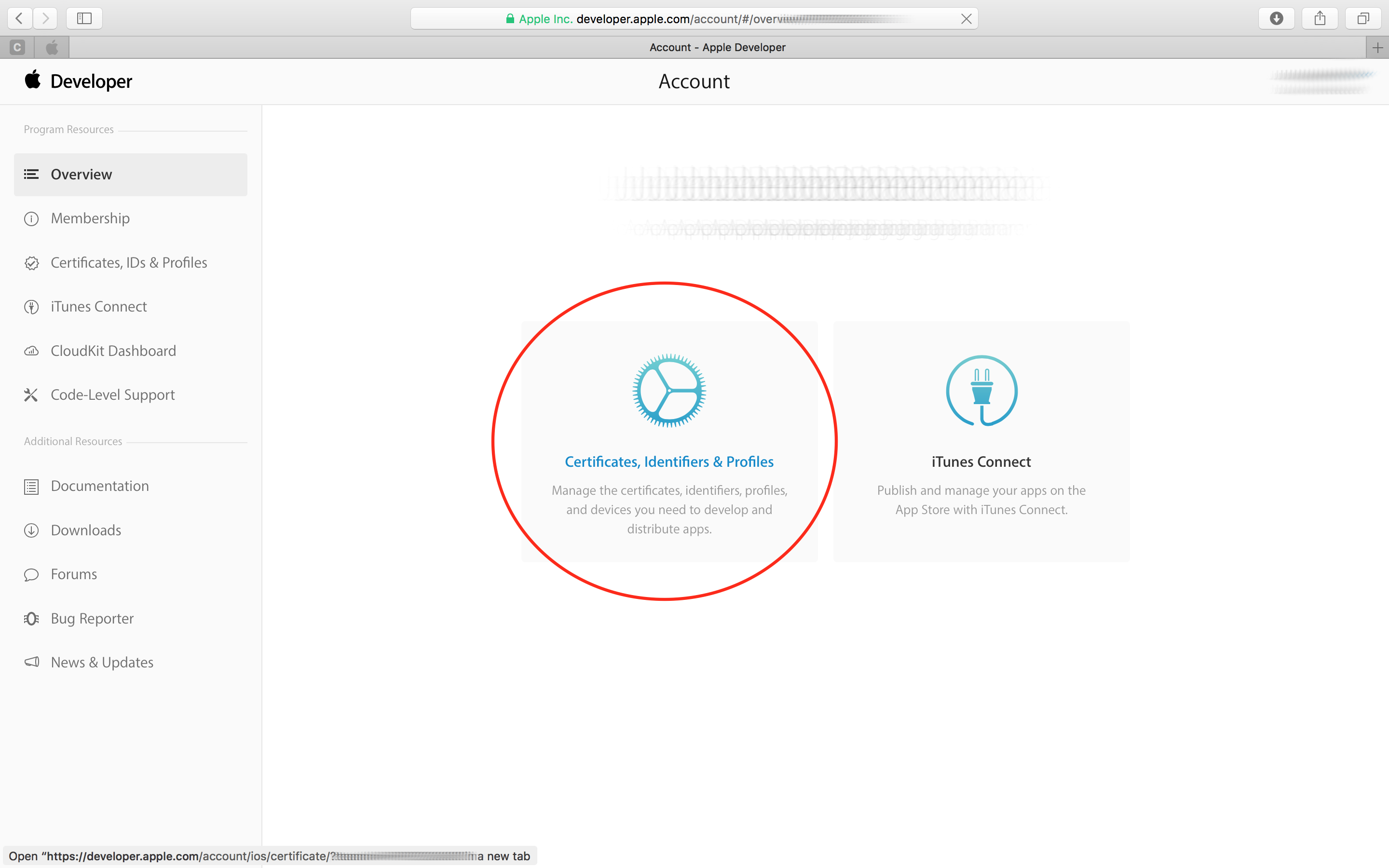

Apple developer mode

Here is a brief diagram of a video encoder pipeline on Apple’s platform. Video Toolbox takes a CVImageBuffer as the input image. It asks the video encoder to apply a compression algorithm such as H.264 to reduce the size of the raw data. The compressed output is wrapped in a CMSampleBuffer, and it can be transmitted over the network for video communication. As we may notice from the previous diagram, the end-to-end latency is affected by two factors: the processing time and the network transmission time. To minimize the processing time, the low-latency mode eliminates frame reordering. A one-in, one-out encoding pattern is followed. Also, the rate controller in this mode adapts faster to network changes, so the delay caused by network congestion is minimized as well. With these two optimizations, we can already see obvious performance improvements compared with the default mode.
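As a rough illustration of the pipeline described here, the following Swift sketch creates a compression session that requests low-latency rate control, then encodes one CVImageBuffer and hands back the resulting CMSampleBuffer. The helper names `makeLowLatencySession` and `encodeFrame` are hypothetical, chosen only for this example. The availability annotation reflects my understanding that the low-latency key needs iOS 14.5 or macOS 11.3, so treat it as an assumption to check against the SDK you build with.

```swift
import CoreMedia
import CoreVideo
import VideoToolbox

/// Hypothetical helper: creates an H.264 session with low-latency rate control requested.
@available(iOS 14.5, macOS 11.3, tvOS 14.5, *)
func makeLowLatencySession(width: Int32, height: Int32) -> VTCompressionSession? {
    // Ask Video Toolbox for an encoder that runs in low-latency mode.
    let encoderSpecification = [
        kVTVideoEncoderSpecification_EnableLowLatencyRateControl as String: true
    ] as CFDictionary

    var session: VTCompressionSession?
    let status = VTCompressionSessionCreate(
        allocator: kCFAllocatorDefault,
        width: width,
        height: height,
        codecType: kCMVideoCodecType_H264,
        encoderSpecification: encoderSpecification,
        imageBufferAttributes: nil,
        compressedDataAllocator: nil,
        outputCallback: nil, // nil because frames are encoded with an output handler below
        refcon: nil,
        compressionSessionOut: &session)

    guard status == noErr else { return nil }
    return session
}

/// Hypothetical helper: submits one input image and delivers the compressed sample buffer.
func encodeFrame(_ imageBuffer: CVImageBuffer,
                 at presentationTime: CMTime,
                 using session: VTCompressionSession,
                 onOutput: @escaping (CMSampleBuffer) -> Void) -> OSStatus {
    return VTCompressionSessionEncodeFrame(
        session,
        imageBuffer: imageBuffer,
        presentationTimeStamp: presentationTime,
        duration: .invalid,
        frameProperties: nil,
        infoFlagsOut: nil
    ) { status, _, sampleBuffer in
        // The compressed frame arrives wrapped in a CMSampleBuffer, ready to be sent.
        guard status == noErr, let sampleBuffer = sampleBuffer else { return }
        onOutput(sampleBuffer)
    }
}
```

In a real app the image buffers would typically come from a camera capture callback, and the `onOutput` closure would pass each sample buffer to the networking layer. Session creation can fail on hardware without a suitable encoder, which is why the sketch returns an optional.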


Let me first give an overview of low-latency video encoding. Finally, I will talk about multiple features we are introducing in low-latency mode.


My name is Peikang, and I’m from the Video Coding and Processing team. Welcome to “Exploring low-latency video encoding with Video Toolbox.” Low-latency encoding is very important for many video applications, especially real-time video communication apps. In this talk, I’m going to introduce a new encoding mode in Video Toolbox to achieve low-latency encoding. The goal of this new mode is to optimize the existing encoder pipeline for real-time applications.

So what does a real-time video application require? We need to minimize the end-to-end latency in the communication so that people won’t be talking over each other. We need to enhance interoperability by enabling video apps to communicate with more devices. The encoder pipeline should be efficient when there is more than one recipient in the call. The app needs to present the video in its best visual quality. We need a reliable mechanism to recover the communication from errors introduced by network loss. The low-latency video encoding that I’m going to talk about today will optimize in all these aspects. With this mode, your real-time application can achieve new levels of performance.

In this talk, first I’m going to give an overview of low-latency video encoding. We can have the basic idea about how we achieve low latency in the pipeline. Then I’m going to show how to use VTCompressionSession APIs to build the pipeline and encode with low-latency mode.